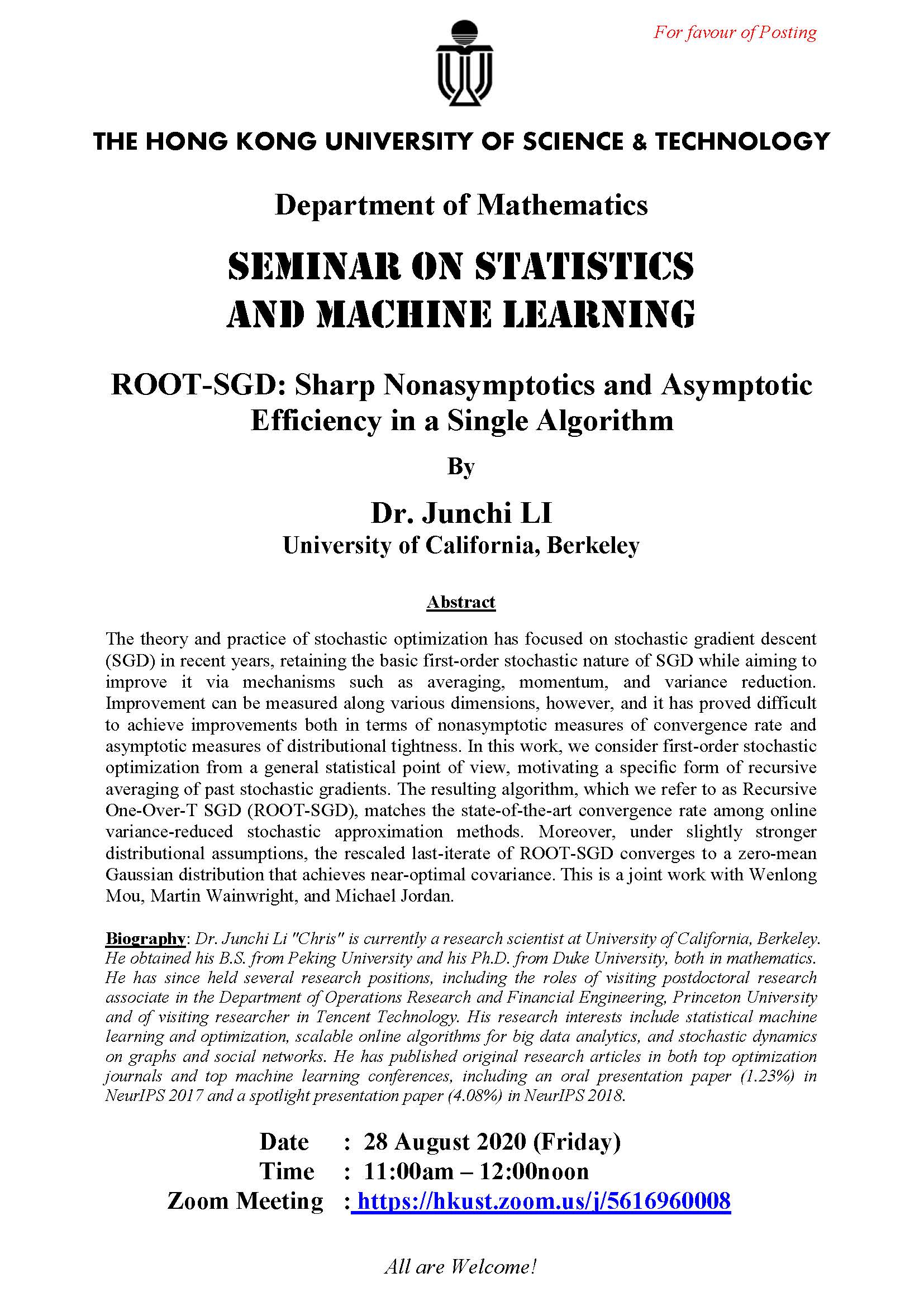

The theory and practice of stochastic optimization have focused on stochastic gradient descent (SGD) in recent years, retaining the basic first-order stochastic nature of SGD while aiming to improve it via mechanisms such as averaging, momentum, and variance reduction. Improvement can be measured along various dimensions, however, and it has proved difficult to achieve improvements in both nonasymptotic measures of convergence rate and asymptotic measures of distributional tightness. In this work, we consider first-order stochastic optimization from a general statistical point of view, motivating a specific form of recursive averaging of past stochastic gradients. The resulting algorithm, which we refer to as Recursive One-Over-T SGD (ROOT-SGD), matches the state-of-the-art convergence rate among online variance-reduced stochastic approximation methods. Moreover, under slightly stronger distributional assumptions, the rescaled last iterate of ROOT-SGD converges to a zero-mean Gaussian distribution that achieves near-optimal covariance. This is joint work with Wenlong Mou, Martin Wainwright, and Michael Jordan.
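For readers who want a concrete picture of "recursive averaging of past stochastic gradients", the following is a minimal sketch of one possible reading: a gradient estimate that is corrected with a weight of 1 - 1/t at step t. The abstract does not state the update rule, so the recursion, the function name `root_sgd_sketch`, the step size, and the toy quadratic problem are all assumptions made for illustration, not the speaker's implementation.

```python
import random


def root_sgd_sketch(grad, x0, step, n_steps, sample, rng):
    """Hypothetical sketch of a recursive one-over-t gradient average.

    grad(x, xi) returns a stochastic gradient at x for the sample xi.
    The recursion below is an assumed form, not taken from the abstract.
    """
    x_prev = x0
    xi = sample(rng)
    v = grad(x_prev, xi)              # t = 1: plain stochastic gradient
    x = x_prev - step * v
    for t in range(2, n_steps + 1):
        xi = sample(rng)              # one fresh sample, evaluated at two iterates
        g_new = grad(x, xi)           # gradient at the current iterate
        g_old = grad(x_prev, xi)      # gradient at the previous iterate, same sample
        v = g_new + (1.0 - 1.0 / t) * (v - g_old)
        x_prev, x = x, x - step * v
    return x


# Toy problem (assumed): minimize E[0.5 * (x - xi)^2] with xi ~ N(3, 1),
# whose minimizer is the mean, 3.
rng = random.Random(0)
x_hat = root_sgd_sketch(
    grad=lambda x, xi: x - xi,
    x0=0.0,
    step=0.5,
    n_steps=5000,
    sample=lambda r: r.gauss(3.0, 1.0),
    rng=rng,
)
```

On this toy quadratic the estimate `v` reduces to the current iterate minus the running mean of all samples seen so far, which is one way to see why the 1 - 1/t weight amounts to averaging over the whole history.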

August 28

11:00am - 12:00pm

Venue

https://hkust.zoom.us/j/5616960008

Speaker/Performer

Dr. Junchi LI

University of California, Berkeley

Organizer

Department of Mathematics

Contact

mathseminar@ust.hk

Audience

Alumni, Faculty and Staff, PG Students, UG Students

Language

English

其他活动

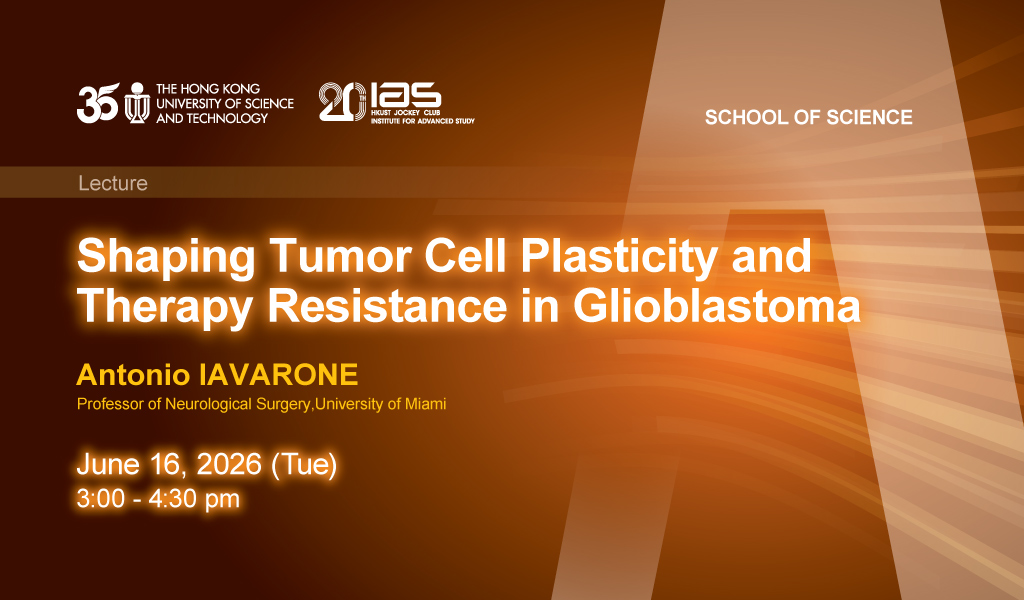

6月16日

研讨会, 演讲, 讲座

IAS / School of Science Joint Lecture - Shaping Tumor Cell Plasticity and Therapy Resistance in Glioblastoma

Abstract

Tumor heterogeneity fueled by plasticity and genetic diversification of cancer cells is key to therapy failure of malignant glioma. The speaker's team implemented spatial and genetic p...

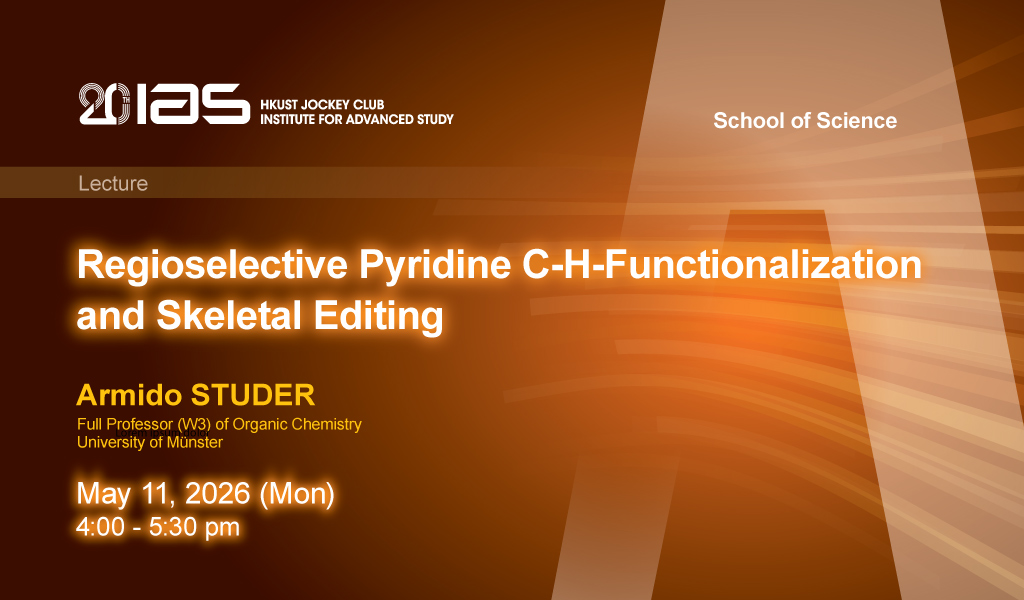

5月11日

研讨会, 演讲, 讲座

IAS / School of Science Joint Lecture - Regioselective Pyridine C-H-Functionalization and Skeletal Editing

Abstract

Pyridines belong to the most abundant heteroarenes in medicinal chemistry and in agrochemical industry. In the lecture, highly regioselective pyridine C-H functionalization through a d...