More about HKUST

November 18

CHEM - PhD Student Seminar - AIE Nanoparticles (AIE NPs) for Biological Application

Student: Mr. Wei HE

Department: Department of Chemistry, HKUST

Supervisor: Professor Benzhong TANG

November 18

CHEM - PhD Student Seminar - Aggregation-induced White Light Emission

Student: Mr. Xueqian ZHAO

Department: Department of Chemistry, HKUST

Supervisor: Professor Benzhong TANG

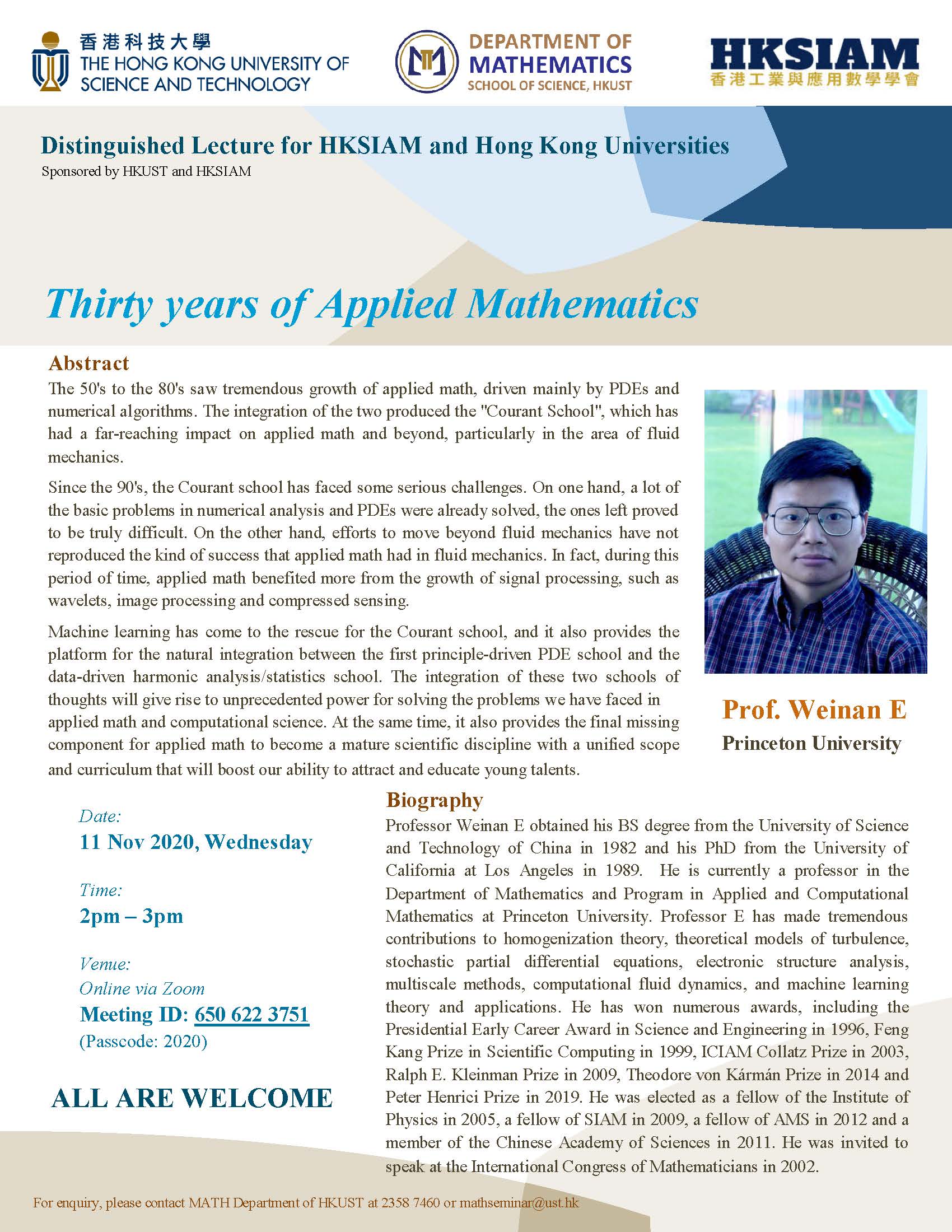

November 11

Seminar, Lecture, Talk

MATH - Seminar on Applied Mathematics - Thirty Years of Applied Mathematics

The 1950s through the 1980s saw tremendous growth in applied mathematics, driven mainly by PDEs and numerical algorithms.

November 9

Seminar, Lecture, Talk

SSCI / IAS Nobel Prize Popular Science Lectures: Black Holes - The Darkest and Brightest, Densest and Emptiest, Simplest and Most Mysterious - 2020 Nobel Prize in Physics

November 6

Seminar, Lecture, Talk

SSCI / IAS Nobel Prize Popular Science Lectures: CRISPR - From Destroying The Intruders to Changing for The Better - 2020 Nobel Prize in Chemistry

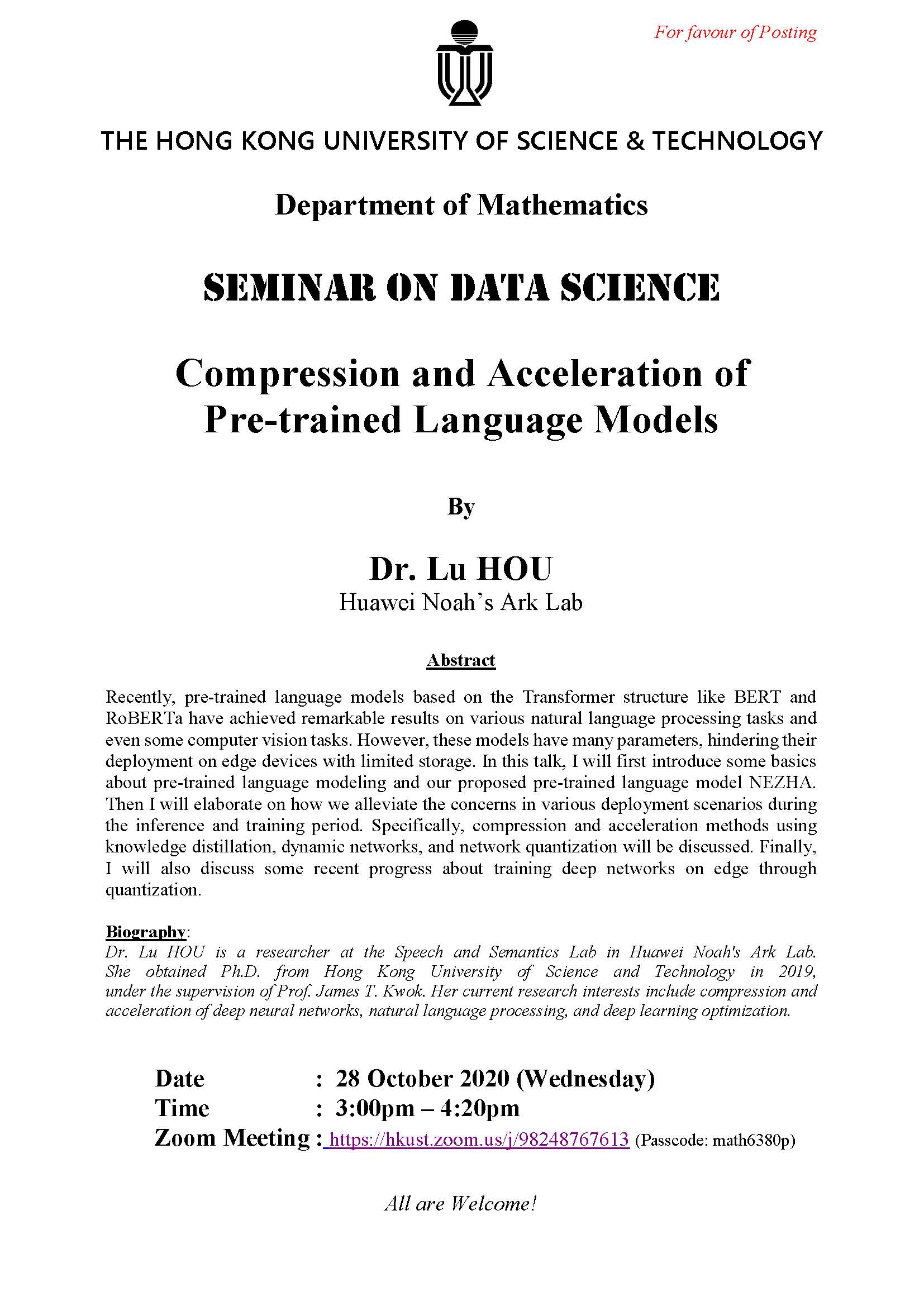

October 28

Seminar, Lecture, Talk

MATH - Seminar on Data Science - Compression and Acceleration of Pre-trained Language Models

Recently, pre-trained language models based on the Transformer architecture, such as BERT and RoBERTa, have achieved remarkable results on various natural language processing tasks and even on some computer vision tasks.

Browse past events held by the School of Science.